Examiner-Ready Output. Every Time.

AI Agent Reports generate structured, citation-backed, audit-traceable documents from every agent run, designed for compliance teams, auditors, senior management, and regulatory examiners. Not summaries. Governed documents.

Governed Documents. Not AI Narratives.

AI Agent Reports are not summaries or chatbot outputs. They are governed documents tied directly to submitted evidence, produced within deterministic execution specifications, and structured to meet regulatory and examiner expectations. Every finding is traceable to a specific source document and reviewer action.

Agent-tied

Linked to a specific role-configured agent

Evidence-grounded

Every finding traced to source docs

Deterministic

Produced within governed specifications

Audit-preserved

Full trail of inputs and actions

REPORT TYPES

Structured Output for Every Review Function

Every time an AI Agent executes a workflow, it produces one of the following structured report types.

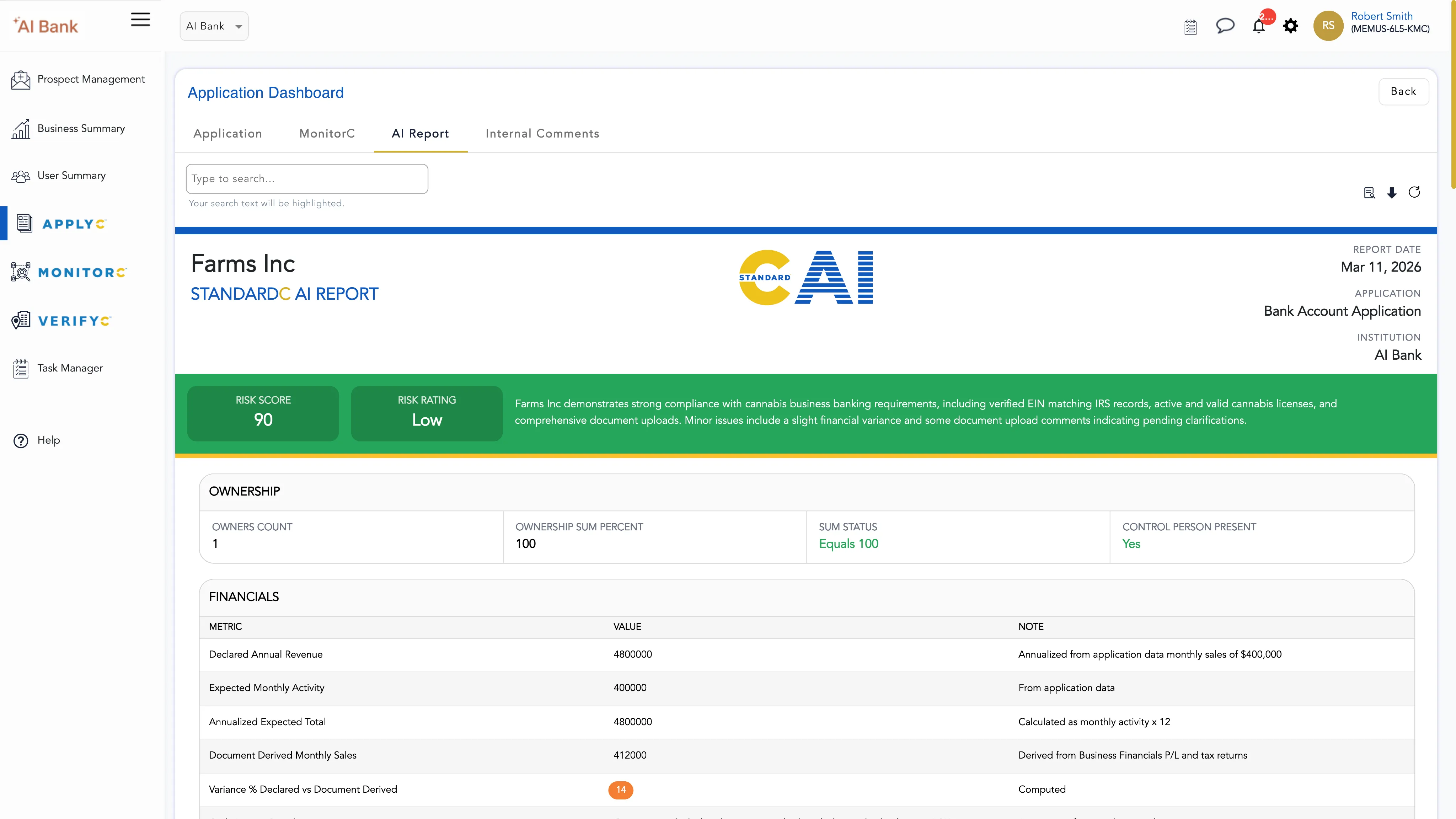

Onboarding Review Reports

Structured summaries of application completeness, identity reconciliation, and beneficial ownership findings — with citations to submitted documentation.

BSA/AML Analysis Reports

Transaction analysis summaries with typology flags, threshold citations, and SAR consideration documentation — traceable to specific transaction records.

Underwriting Review Reports

Credit file findings with DSCR validation, covenant analysis, and exception identification — mapped directly to submitted financial statements and loan documentation.

Periodic Review Reports

Due diligence refresh outputs with variance tracking and risk signal updates, comparing current findings against prior review periods with citation continuity.

Audit Workpapers

Structured documentation of control testing, policy adherence findings, and exception handling — formatted for internal audit and regulatory workpaper requirements.

Examiner-Ready Packages

Compiled output sets formatted for regulatory examination and board reporting — structured to meet examiner documentation expectations without manual preparation.

Defensible Institutional Documentation

REPORT GENERATION PROCESS

Validated Before Storage. Defensible by Design.

The report generation pipeline enforces quality through structured validation — outputs that don't meet schema requirements, citation standards, or evidence-grounding constraints are automatically rejected and regenerated under the same fixed parameters.

Schema Validation

Every output is validated against a required JSON schema — all required fields must be present and correctly structured.
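As a rough illustration of what a required-field check can look like, here is a minimal sketch in Python. The field names (`report_type`, `agent_id`, `findings`) and the `schema_errors` helper are hypothetical and chosen only for the example; the actual report schema is not described here, and a production system would more likely use a full JSON Schema validator.

```python
from typing import Any

# Hypothetical required fields and their expected types (assumption:
# the real report schema is not published in this document).
REQUIRED_FIELDS: dict[str, type] = {
    "report_type": str,
    "agent_id": str,
    "findings": list,
}


def schema_errors(report: dict[str, Any]) -> list[str]:
    """Return a list of schema problems; an empty list means the report conforms."""
    errors: list[str] = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in report:
            errors.append(f"missing field: {name}")
        elif not isinstance(report[name], expected):
            errors.append(f"wrong type for {name}: expected {expected.__name__}")
    return errors
```

A report with every required field present and correctly typed yields an empty list; anything else yields one message per problem, which a pipeline could log alongside the rejected output.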

Citation Grounding Verification

Every finding must be mapped to a specific source document in the case file. Unsupported inference is rejected at validation.
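One way to picture this check, sketched in Python under stated assumptions: each finding carries a hypothetical `source_document_ids` list, and the case file is represented as a set of document IDs. Both names, and the `ungrounded_findings` helper, are illustrative rather than the product's actual data model.

```python
def ungrounded_findings(findings: list[dict], case_file_ids: set[str]) -> list[str]:
    """Return the IDs of findings that lack a valid citation into the case file."""
    rejected: list[str] = []
    for finding in findings:
        # Hypothetical field name; a finding with no citations is unsupported.
        cited = finding.get("source_document_ids", [])
        # Grounded only if at least one document is cited and every cited
        # document actually exists in the case file.
        if not cited or any(doc_id not in case_file_ids for doc_id in cited):
            rejected.append(finding["id"])
    return rejected
```

Under this sketch, a finding that cites nothing and a finding that cites a document outside the case file are treated the same way: both fail validation.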

Evidence-Grounding Requirements

The system verifies that all conclusions are grounded in submitted evidence — not hallucinated, inferred, or fabricated.

Automatic Rejection & Rerun

Nonconforming outputs are rejected and rerun under identical fixed parameters until a conforming output is produced. Only validated outputs are stored.
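The reject-and-rerun behavior can be sketched as a simple loop. In this Python example, `run_agent` and `validate` are hypothetical callables supplied by the caller, and the `max_attempts` cap is an added assumption for safety; the description above does not state a retry limit.

```python
from typing import Any, Callable


def generate_validated(
    run_agent: Callable[[dict], Any],
    validate: Callable[[Any], list[str]],
    params: dict,
    max_attempts: int = 5,  # assumption: a cap is not specified in the source
) -> tuple[Any, int]:
    """Rerun the agent with the same fixed parameters until the output validates.

    Returns the conforming output and the number of attempts it took.
    """
    for attempt in range(1, max_attempts + 1):
        output = run_agent(params)  # identical parameters on every attempt
        if not validate(output):    # an empty error list means conforming
            return output, attempt
        # Nonconforming output is discarded here and never stored.
    raise RuntimeError(f"no conforming output after {max_attempts} attempts")
```

Only the value returned by this function would be passed on to storage, which mirrors the rule that only validated outputs are kept.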

See AI Agent Reports in Action

Review sample outputs and see how structured, citation-backed reporting transforms your review workflows.
